
> glass_scholar --status active

Fourth-year Computer Science student at NYU Tandon, GLASS Scholar since 2023. Through leadership, global experiences, and technical innovation, I'm working to democratize quality education worldwide.

// about

Growing up as a first-generation student, I experienced firsthand how access to quality education can transform lives—and how its absence can limit potential. This understanding has driven every decision in my academic journey.

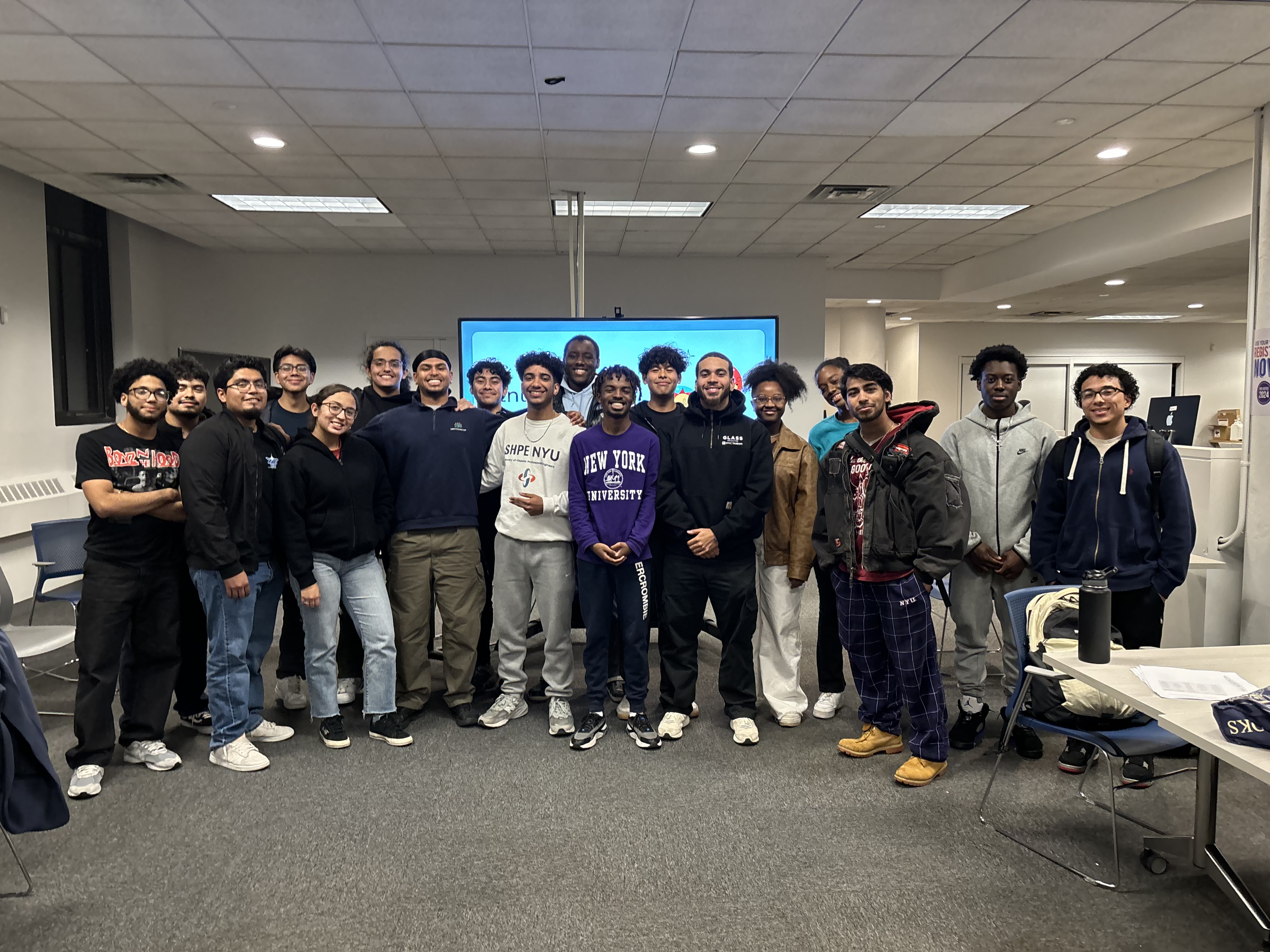

Through GLASS, I've channeled this passion into meaningful action: from guiding first-year engineering students as a Teaching Assistant, to founding mentorship programs that support underrepresented students in tech, to immersing myself in global perspectives through study abroad experiences in Abu Dhabi and Seoul.

Each experience has reinforced my belief that technology, particularly AI and machine learning, can be a powerful equalizer. My mission is to build tools that adapt to how different students learn, breaking down barriers of geography, language, and cultural context. I want to create a world where your zip code doesn't determine your access to quality education.

"AI and machine learning are powerful tools to bridge global educational gaps. My mission is to create a world where quality education is accessible to all, regardless of geography or background."

// program

Study abroad funding and international experiences

Conferences, development, and research funding

Family of driven students pushing each other higher

Transforming engineers into global changemakers

// framework

Guided first-year students through engineering fundamentals, gaining insights into learning challenges that now inform my educational technology work.

Led efforts to increase representation for underrepresented students in CS, creating spaces where students could see themselves succeeding in tech.

Two semesters immersed in Middle Eastern culture while studying AI. Transformed how I think about building culturally-aware technology.

Three weeks studying how South Korea balances tech advancement with sustainability. Learned to implement solutions across cultural boundaries.

Built Q-quake—an earthquake detection system using quantum computing. Three intense days proving breakthrough solutions come from diverse perspectives.

Built real projects for global organizations while developing intercultural skills. Learned to translate technical solutions across cultures.

Mangrove restoration, turtle conservation, and rural school support. Saw firsthand how education is key to addressing environmental and social challenges.

Additional service experience to be added as my GLASS journey continues.

Built AI-powered solutions for government applications. Developed scalable ML pipelines and gained experience in responsible AI development.

Networked with professionals pioneering AI and ML. Saw Hispanic engineers thriving in tech—proof there's space for me in this field.

// timeline

// senior_project

I built two AI-powered educational tools that adapt to how different students learn: a CS Learning Platform that generates personalized code examples tailored to student interests, and an intelligent Q&A system for NYU's Intro to Engineering course using retrieval-augmented generation. Both are responsive to cultural contexts, language barriers, and resource constraints.

These tools are designed to show that technology can democratize education without erasing cultural identity, recognizing that students learn differently based on context, not ability.
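To make the retrieval-augmented idea concrete, here is a minimal sketch of the retrieval step: find the course snippet most relevant to a student's question, then place it in the prompt handed to a language model. This is illustrative only and not the Q&A system's actual implementation. The snippets, the function names (`tokenize`, `cosine`, `retrieve`, `build_prompt`), and the bag-of-words cosine scoring are all assumptions chosen to keep the example dependency-free; a production system would more likely use learned embeddings and a vector index.

```python
import math
import re
from collections import Counter

# Hypothetical course snippets, standing in for real course materials.
COURSE_SNIPPETS = [
    "Lab reports are due one week after the lab session.",
    "Ohm's law states that voltage equals current times resistance.",
    "The semester project requires a working prototype and a final presentation.",
]


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, snippets, k=1):
    """Return the k snippets most similar to the question."""
    q_vec = Counter(tokenize(question))
    ranked = sorted(
        snippets,
        key=lambda s: cosine(q_vec, Counter(tokenize(s))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, snippets, k=1):
    """Assemble a prompt that grounds the answer in retrieved material."""
    context = "\n".join(retrieve(question, snippets, k))
    return (
        "Answer using only this course material:\n"
        f"{context}\n\n"
        f"Question: {question}"
    )
```

Calling `build_prompt("When are lab reports due?", COURSE_SNIPPETS)` places the lab-report snippet ahead of the question, so the model answers from course material rather than from general knowledge.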

AI that adapts to learning styles and recognizes engagement patterns across backgrounds.

VR labs and AR explanations making immersive learning borderless and accessible.

Infrastructure reaching remote areas with stable connections on limited bandwidth.

// final_paper

Quality education should not depend on where you are born or how much money your family has. Yet hundreds of millions of students worldwide lack access to the kind of personalized support that makes learning actually work. This paper examines two AI-powered tools I developed to address different pieces of this problem. The first is a Computer Science learning platform that generates code examples tailored to what individual students care about. Instead of teaching everyone the same way, it adapts. A student interested in music gets examples about playlist manipulation. A student passionate about climate gets examples analyzing environmental data. The concept stays the same, but the entry point changes. The second is a question-and-answer system for NYU's Introduction to Engineering course that gives students instant, accurate answers based on actual course materials while helping instructors see where students struggle most. Both projects are in active development. I am currently conducting surveys, focus groups, and research to understand what students actually need as I continue building. This paper documents the technical design of both systems, examines what they could realistically do for educational access, and honestly addresses their limitations. Technology alone will not solve educational inequality. But tools designed thoughtfully, tested carefully, and deployed with equity in mind can expand access to quality learning for students who currently get left behind.
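The "same concept, different entry point" idea can be sketched in a few lines. The example below is an assumption-laden illustration, not the platform's implementation: the platform generates examples with AI, whereas this sketch swaps in a fixed lookup table (`THEMES`) and a hypothetical helper (`for_loop_example`) to show how one lesson on `for` loops can be themed to a student's interest.

```python
# Hypothetical interest themes: a variable name plus sample data for each.
# A fixed table stands in here for AI-generated examples.
THEMES = {
    "music": ("playlist", ["Intro", "Chorus", "Outro"]),
    "climate": ("temperatures", [14.2, 15.1, 15.8]),
}

# Fallback used when the student's interest is not in the table.
DEFAULT_THEME = ("numbers", [1, 2, 3])


def for_loop_example(interest):
    """Return a short for-loop lesson snippet themed to the given interest."""
    name, items = THEMES.get(interest.lower(), DEFAULT_THEME)
    return (
        f"{name} = {items!r}\n"
        f"for item in {name}:\n"
        f"    print(item)\n"
    )
```

`for_loop_example("music")` returns a loop over a `playlist`, while `for_loop_example("climate")` returns the same loop over `temperatures`: the concept being taught is identical, and only the framing changes.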

// contact